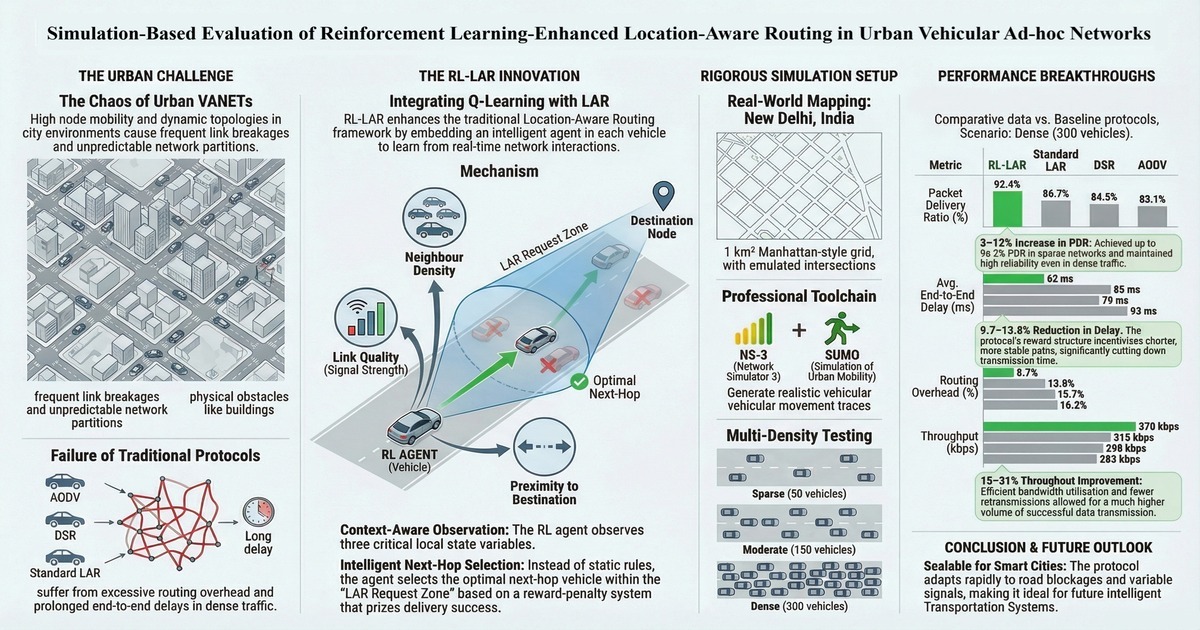

Simulation-Based Evaluation of Reinforcement Learning-Enhanced Location-Aware Routing in Urban Vehicular Ad-hoc Networks (VANETs)

DOI:

https://doi.org/10.59796/jcst.V16N2.2026.180

Keywords:

location-aware routing, ns3 simulation, reinforcement learning, routing protocols, urban mobility, vehicular ad hoc networks

Abstract

Vehicular Ad Hoc Networks (VANETs) need robust routing protocols to ensure rapid and reliable data transmission in urban environments characterized by high mobility and highly dynamic topologies. In such settings, traditional routing protocols incur excessive routing overhead, higher hop counts, longer end-to-end delays, and lower packet delivery ratios (PDR), which together hinder reliable and efficient data dissemination within intelligent transportation systems (ITS). This research presents a simulation-based evaluation of a Reinforcement Learning (RL)-enhanced Location-Aware Routing (LAR) protocol, referred to as RL-LAR. By integrating RL with the traditional LAR protocol, the proposed framework adapts dynamically to network fluctuations, thereby reducing routing overhead, hop counts, and end-to-end delay. RL-LAR was compared against the classical routing protocols AODV, DSR, and LAR in sparse (50 vehicles), moderate (150 vehicles), and dense (300 vehicles) urban traffic scenarios using NS-3 and SUMO, and it outperformed them in every scenario. Relative to the baseline protocols, PDR improved by 3% to 12%, average end-to-end delay fell by 9.7% to 13.8%, routing overhead decreased by 4.3% to 8.7%, hop counts were reduced by 15% to 23%, and throughput increased by 15% to 31%. ANOVA confirmed that these gains are statistically significant (p < 0.01), indicating that RL-LAR is suitable for routing in smart cities and future intelligent transportation systems.
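The abstract does not state which RL algorithm or reward signal is used, so the following is only a minimal sketch of how RL could be layered on location-aware routing, not the authors' implementation. It assumes tabular Q-learning, a rectangular request zone in the style of the original LAR scheme, and a caller-supplied reward. The names `request_zone`, `in_zone`, and `QNextHop`, along with the `alpha` and `gamma` defaults, are hypothetical.

```python
from collections import defaultdict


def request_zone(src, dst_last, speed, elapsed):
    """Smallest axis-aligned rectangle that contains the source and the
    expected zone, i.e. a circle of radius speed * elapsed centred on the
    destination's last known position (assumed LAR-style request zone)."""
    r = speed * elapsed
    xmin = min(src[0], dst_last[0] - r)
    xmax = max(src[0], dst_last[0] + r)
    ymin = min(src[1], dst_last[1] - r)
    ymax = max(src[1], dst_last[1] + r)
    return xmin, ymin, xmax, ymax


def in_zone(pos, zone):
    """True if position (x, y) lies inside the rectangular zone."""
    xmin, ymin, xmax, ymax = zone
    return xmin <= pos[0] <= xmax and ymin <= pos[1] <= ymax


class QNextHop:
    """Hypothetical tabular Q-learning agent for next-hop selection."""

    def __init__(self, alpha=0.5, gamma=0.9):
        self.alpha = alpha            # learning rate (assumed value)
        self.gamma = gamma            # discount factor (assumed value)
        self.q = defaultdict(float)   # (node, neighbor) -> Q-value

    def choose(self, node, neighbors, zone):
        """Pick the highest-valued neighbor inside the request zone.
        neighbors maps neighbor id -> (x, y); returns None if the zone
        contains no neighbor."""
        candidates = [n for n, pos in neighbors.items() if in_zone(pos, zone)]
        if not candidates:
            return None
        return max(candidates, key=lambda n: self.q[(node, n)])

    def update(self, node, nxt, reward, next_neighbors):
        """Standard Q-learning update after forwarding from node to nxt."""
        best_next = max((self.q[(nxt, n)] for n in next_neighbors), default=0.0)
        key = (node, nxt)
        self.q[key] += self.alpha * (reward + self.gamma * best_next - self.q[key])
```

In a simulation loop, a forwarding node would call `choose` with its current neighbor table and then `update` once feedback arrives for the hop, for example a reward derived from delivery success or measured delay. The reward design is left open here because the abstract does not specify it.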

License

Copyright (c) 2026 Journal of Current Science and Technology

This work is licensed under a Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 International License.